About

Hi there! I am Xiaoyang Lyu (吕晓阳), a fourth-year PhD student in the CVMI Lab at the University of Hong Kong, supervised by Prof. Xiaojuan Qi. I am currently a visiting student at the University of Cambridge, supervised by Prof. Shangzhe (Elliott) Wu and working closely with Ruining Li and Matt Zhou.

My research vision is to bridge the gap between the physical and digital worlds by replicating complex physics, geometry, and material properties within simulators. I believe that high-fidelity world modeling is the key to advancing embodied AI and creating agents that are truly helpful in the real world. I also work closely with Xin Kong, Zizhang Li, Yi-Hua Huang, and Yang-Tian Sun on these endeavors.

While my previous work focused on rule-based and feed-forward reconstruction and rendering, I am now exploring how large generative models and scaling laws can reshape traditional computer vision tasks. I aim to combine robust 3D pipelines with generative models, using traditional methods to ensure physical accuracy while leveraging generation to scale.

I am actively seeking industry roles or postdoctoral opportunities related to embodied AI and world models. Feel free to contact me.

Publications

Articraft: An Agentic System for Scalable Articulated 3D Asset Generation

M Zhou#*, R Li*, X Lyu*, Z Song*, Z Huang*, C Zheng, C Rupprecht, A Vedaldi, S Wu

arXiv preprint arXiv:2605.15187, 2026

Stabilizing Streaming Video Geometry via Dynamic Feature Normalization

X Lyu, M Liu, X Wu, R Wang, YH Huang, YT Sun, S Shi, X Qi

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2026

X Han, M Liu, Y Chen, J Yu, X Lyu, Y Tian, B Wang, W Zhang, J Pang

IEEE International Conference on Robotics and Automation, 2026

C Shi, S Shi, X Lyu, C Liu, K Sheng, B Zhang, L Jiang

The Fourteenth International Conference on Learning Representations, 2026

DiST-4D: Disentangled Spatiotemporal Diffusion with Metric Depth for 4D Driving Scene Generation

J Guo, Y Ding, X Chen, S Chen, B Li, Y Zou, X Lyu, F Tan, X Qi, Z Li, ...

Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025

Deformable Radial Kernel Splatting

YH Huang, MX Lin, YT Sun, Z Yang, X Lyu, YP Cao, X Qi

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2025

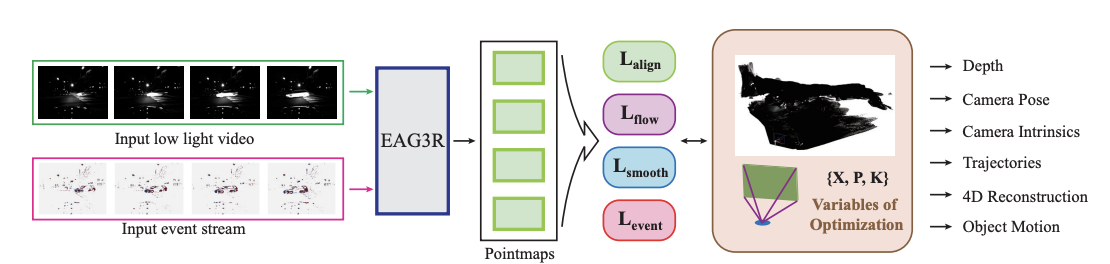

EAG3R: Event-Augmented 3D Geometry Estimation for Dynamic and Extreme-Lighting Scenes

X Wu, Y Yu, X Lyu, Y Huang, B Wang, B Zhang, Z Wang, X Qi

Advances in Neural Information Processing Systems 38, 2025

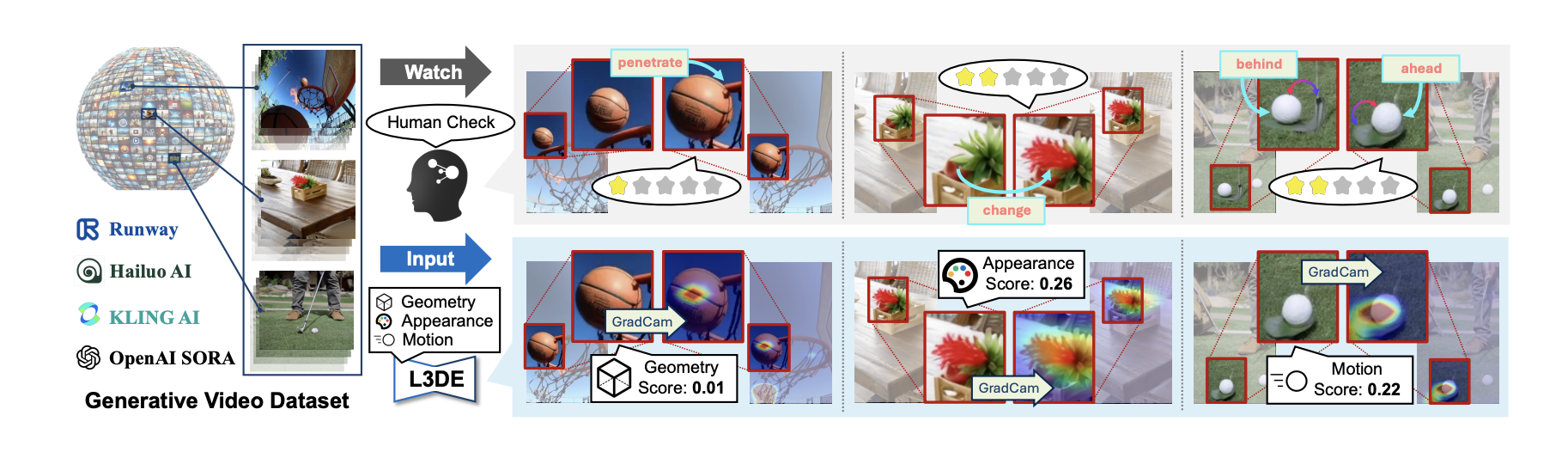

C Chang, J Liu, Z Liu, X Lyu, YH Huang, X Tao, P Wan, D Zhang, X Qi

Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025

SC-GS: Sparse-Controlled Gaussian Splatting for Editable Dynamic Scenes

YH Huang, YT Sun, Z Yang, X Lyu, YP Cao, X Qi

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024

3DGSR: Implicit Surface Reconstruction with 3D Gaussian Splatting

X Lyu, YT Sun, YH Huang, X Wu, Z Yang, Y Chen, J Pang, X Qi

ACM Transactions on Graphics (TOG) 43 (6), 1-12, 2024

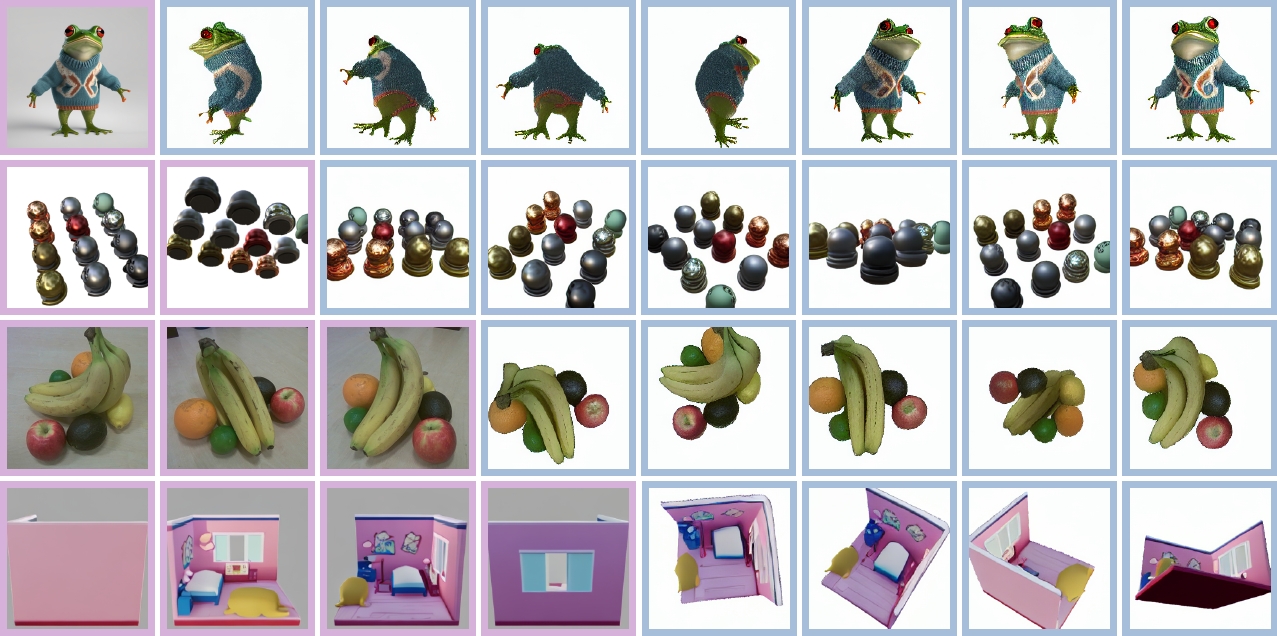

EscherNet: A Generative Model for Scalable View Synthesis

X Kong*, S Liu*, X Lyu, M Taher, X Qi, AJ Davison

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024

Splatter a Video: Video Gaussian Representation for Versatile Processing

YT Sun, Y Huang, L Ma, X Lyu, YP Cao, X Qi

Advances in Neural Information Processing Systems 37, 50401-50425, 2024

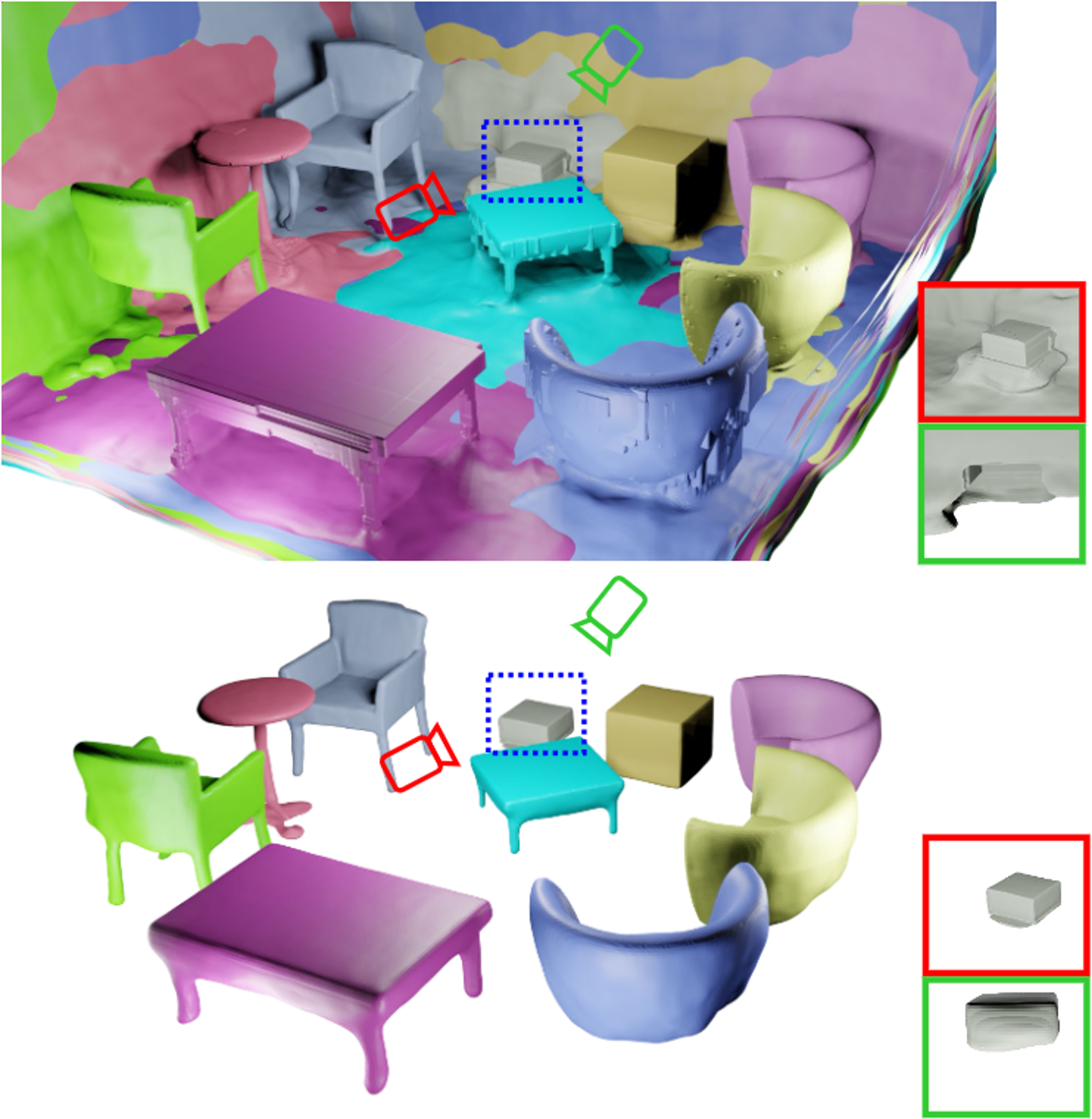

Total-Decom: Decomposed 3D Scene Reconstruction with Minimal Interaction

X Lyu, C Chang, P Dai, YT Sun, X Qi

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024

Spec-Gaussian: Anisotropic View-Dependent Appearance for 3D Gaussian Splatting

Z Yang, X Gao, YT Sun, Y Huang, X Lyu, W Zhou, S Jiao, X Qi, X Jin

Advances in Neural Information Processing Systems 37, 61192-61216, 2024

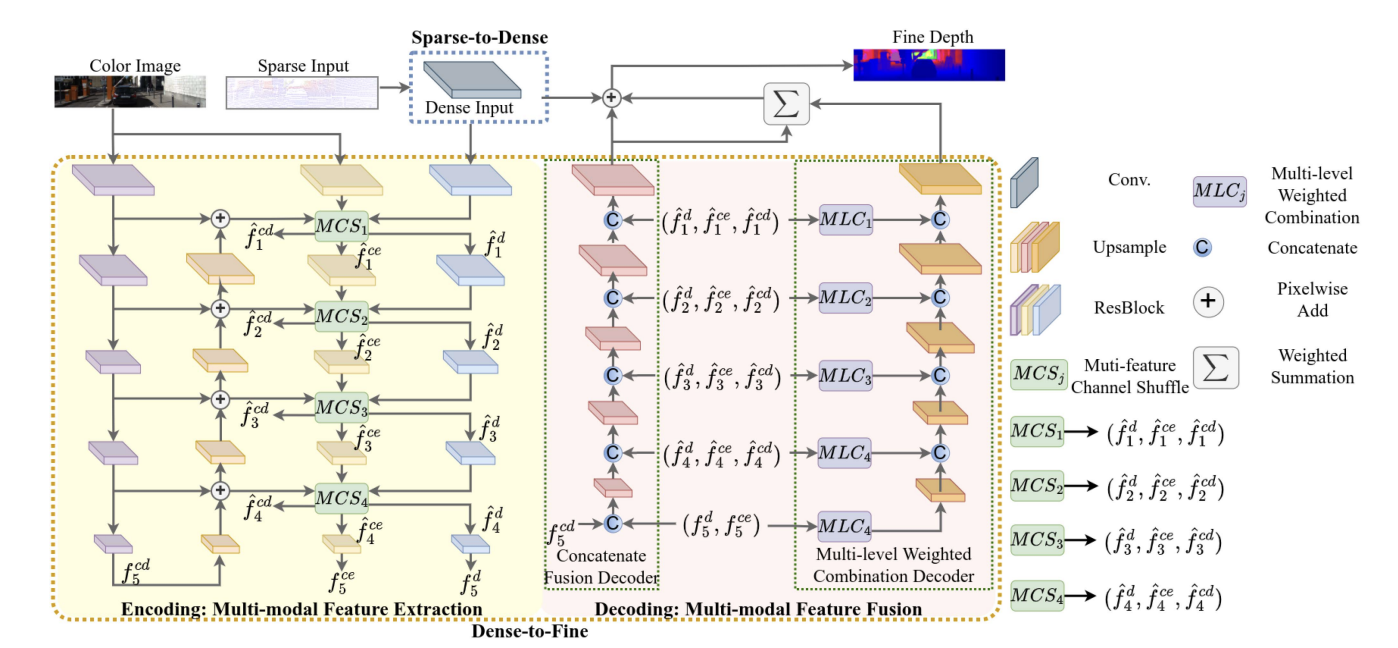

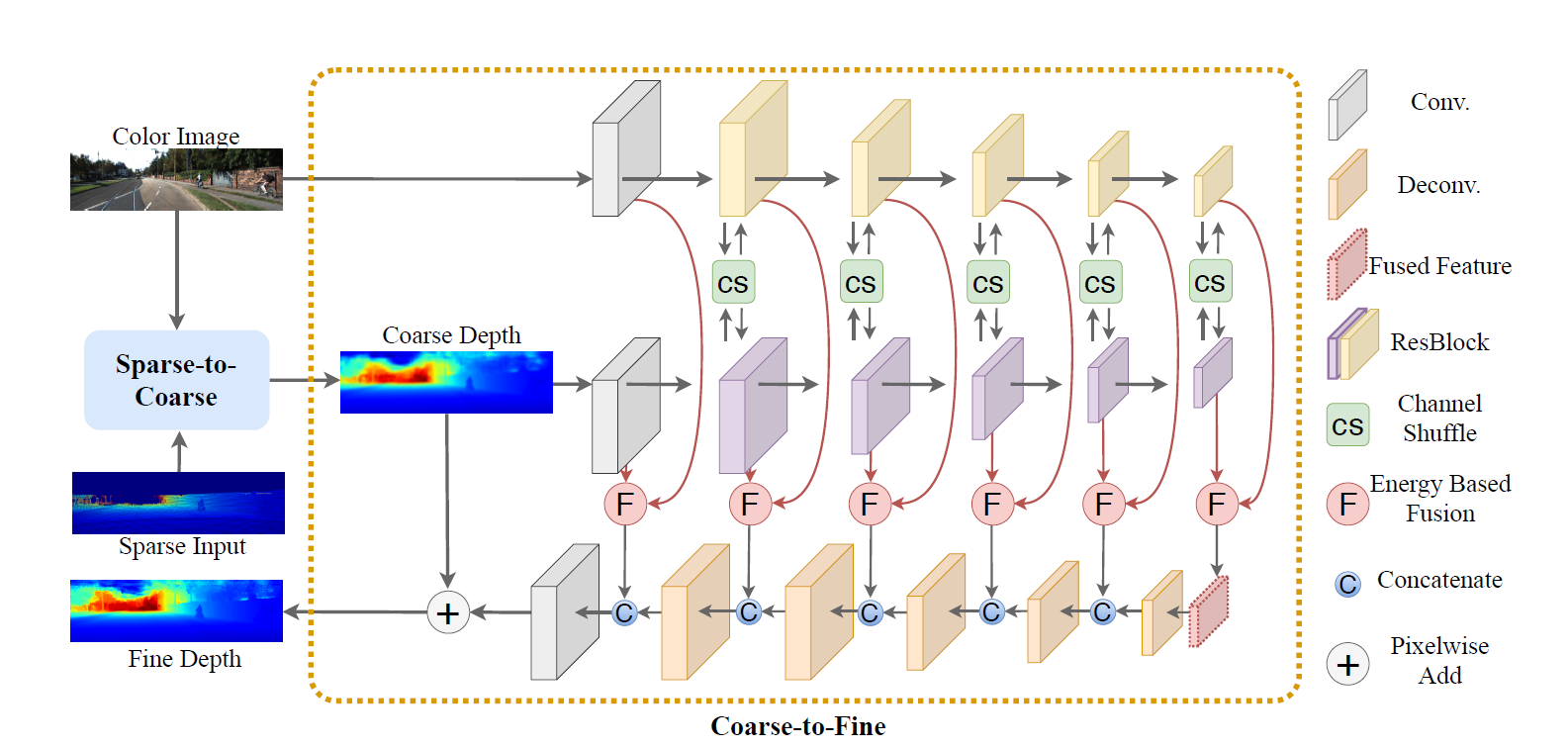

MFF-Net: Towards Efficient Monocular Depth Completion With Multi-Modal Feature Fusion

L Liu, X Song, J Sun, X Lyu, L Li, Y Liu, L Zhang

IEEE Robotics and Automation Letters 8 (2), 920-927, 2023

Hybrid Neural Rendering for Large-Scale Scenes with Motion Blur

P Dai, Y Zhang, X Yu, X Lyu, X Qi

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023

X Lyu, P Dai, Z Li, D Yan, Y Lin, Y Peng, X Qi

Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023

Efficient Implicit Neural Reconstruction Using LiDAR

D Yan, X Lyu, J Shi, Y Lin

IEEE International Conference on Robotics and Automation, 2023

RICO: Regularizing the Unobservable for Indoor Compositional Reconstruction

Z Li, X Lyu, Y Ding, M Wang, Y Liao, Y Liu

Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023

FCFR-Net: Feature Fusion based Coarse-to-Fine Residual Learning for Depth Completion

L Liu, X Song, X Lyu, J Diao, M Wang, Y Liu, L Zhang

Proceedings of the AAAI Conference on Artificial Intelligence 35 (3), 2136-2144, 2021

HR-Depth: High Resolution Self-Supervised Monocular Depth Estimation

X Lyu, L Liu, M Wang, X Kong, L Liu, Y Liu, X Chen, Y Yuan

Proceedings of the AAAI Conference on Artificial Intelligence 35 (3), 2021

Services

- Reviewer for ICCV, ECCV, CVPR, TPAMI, SIGGRAPH, TVCG, ICLR, ICML

Awards

- Hong Kong Presidential Scholarship

- National Scholarship

- World Champion of the ICRA 2018 AI Challenge